FaceReader

The world’s first tool capable of automatically analyzing facial expressions

FaceReader History

FaceReader is the world’s first tool capable of automatically analyzing facial expressions, providing users with an objective assessment of a person’s emotion.

In 2007 FaceReader 1.0 was released. Since then, a new FaceReader release has been brought to market on an annual basis, with FaceReader 9, released in October 2021, being the current version. With every purchase of FaceReader you receive a complete software package with full customer support offered by VicarVision’s partner, Noldus.

FaceReader Clients

FaceReader is used worldwide at more than 1000 universities, research institutes, and companies in many fields, such as consumer behavior research, usability studies, psychology, educational research and market research.

Classifications

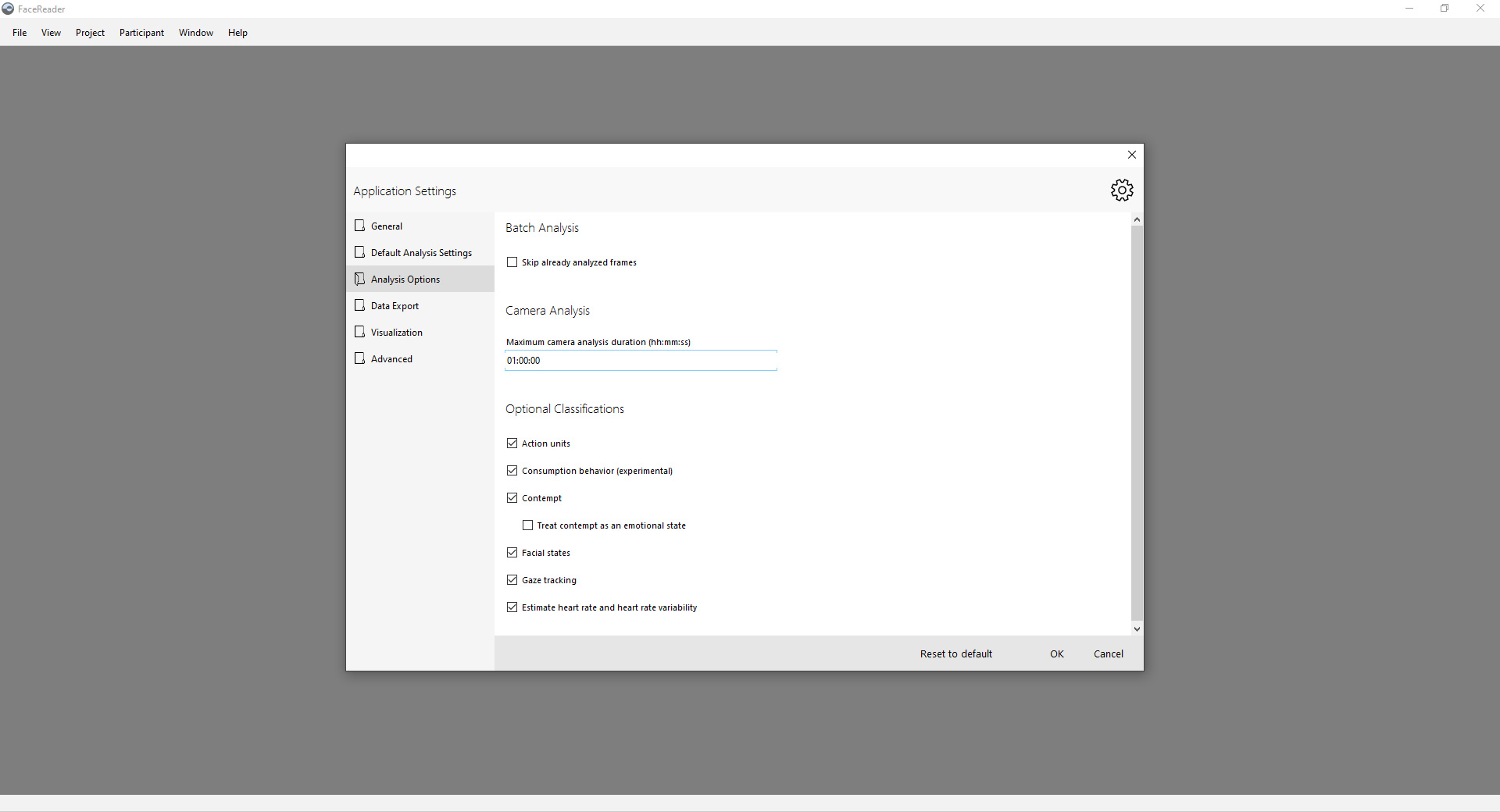

FaceReader can report many different types of facial classifications.

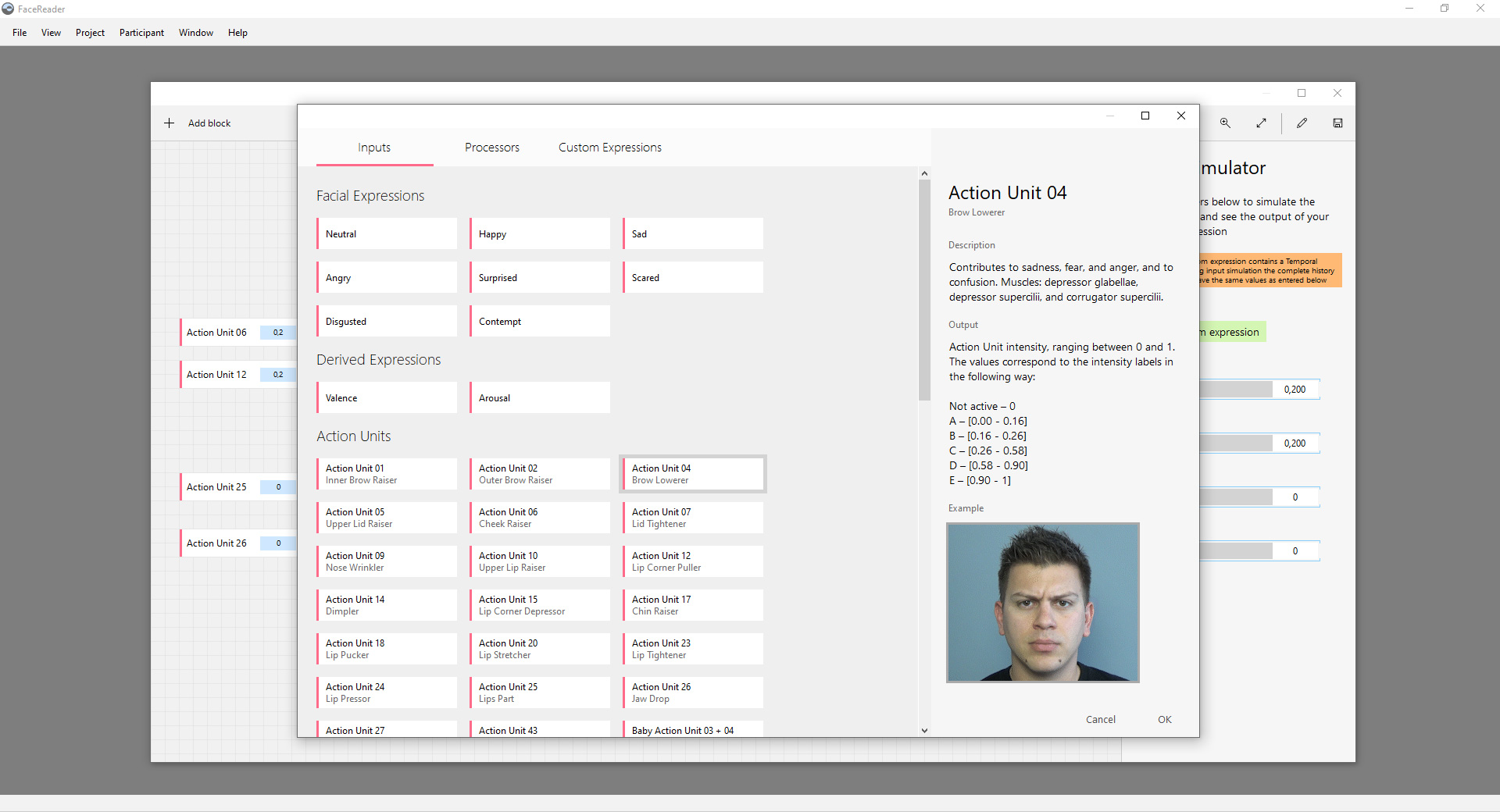

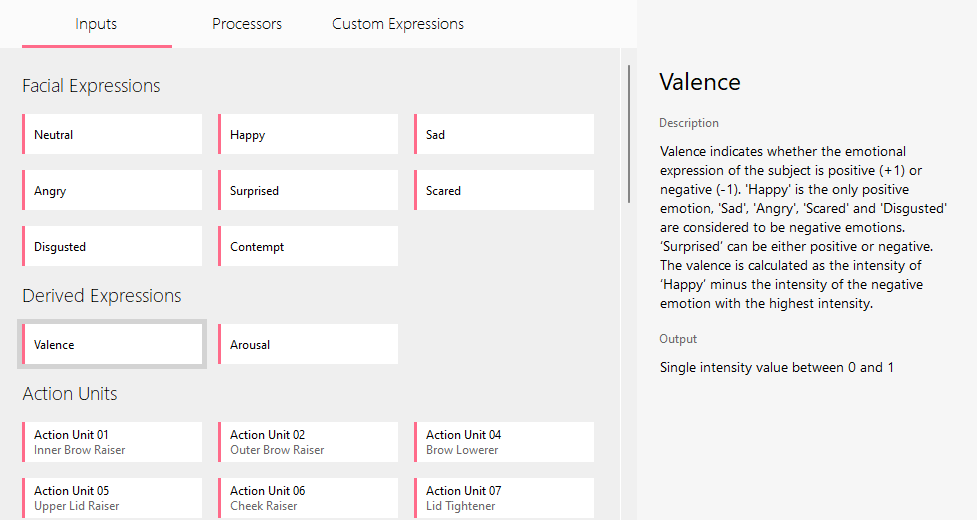

Facial Expressions

Happy, Sad, Angry, Surprised, Scared, Disgusted, Contempt and Neutral.

Valence

A measure of the attitude of the participant (positive vs negative).

Arousal

A measure of the activity of the participant (active vs inactive).
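Valence is described above only as positive versus negative. A definition often given for FaceReader computes it as the intensity of Happy minus the intensity of the strongest negative expression, which yields a value between -1 and 1. The sketch below assumes that definition, an assumed set of negative expressions, and a hypothetical dictionary of per-frame intensities in [0, 1]. How Arousal is computed is not described on this page, so it is not sketched.

```python
from typing import Mapping

# Assumed set of negative expressions; whether Contempt is included is not
# stated on this page, so it is left out here.
NEGATIVE = ("Sad", "Angry", "Scared", "Disgusted")


def valence(intensities: Mapping[str, float]) -> float:
    """Hypothetical valence score in [-1, 1] from intensities in [0, 1].

    Assumes valence = Happy - max(intensity of the negative expressions).
    """
    happy = intensities.get("Happy", 0.0)
    strongest_negative = max(intensities.get(name, 0.0) for name in NEGATIVE)
    return happy - strongest_negative


frame = {"Happy": 0.80, "Sad": 0.05, "Angry": 0.10, "Scared": 0.0, "Disgusted": 0.02}
print(round(valence(frame), 2))  # 0.7
```

Taking the maximum rather than the sum of the negative intensities keeps the score inside [-1, 1] even when several negative expressions are active at once.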

Action Units

20 of the most common Facial Action Units.

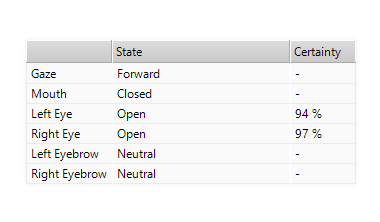

Facial States

Eyes open/closed, mouth open/closed, eyebrows lowered/neutral/raised.

Global Gaze

A global gaze direction (left, forward or right) helps to determine attention.
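The page does not say how the left/forward/right decision is made. A minimal sketch, assuming a horizontal gaze angle in degrees per frame (negative = left, positive = right) and a hypothetical ±15° threshold, labels each frame and reports the share of forward-looking frames as a simple attention proxy.

```python
from typing import Iterable

# Hypothetical threshold: |angle| up to this many degrees counts as "forward".
FORWARD_LIMIT_DEG = 15.0


def gaze_direction(yaw_deg: float, limit: float = FORWARD_LIMIT_DEG) -> str:
    """Map a horizontal gaze angle (assumed: negative = left) to a label."""
    if yaw_deg < -limit:
        return "left"
    if yaw_deg > limit:
        return "right"
    return "forward"


def forward_share(yaw_angles: Iterable[float]) -> float:
    """Fraction of frames labeled 'forward', used here as a crude attention proxy."""
    labels = [gaze_direction(a) for a in yaw_angles]
    return labels.count("forward") / len(labels) if labels else 0.0


print(forward_share([-30.0, -5.0, 0.0, 12.0, 40.0]))  # 0.6
```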

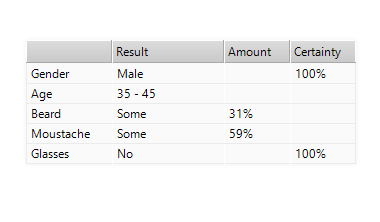

Characteristics

Gender, age and the presence of glasses, a beard and a moustache.

Head Pose

Accurate head pose can be determined from the 3D face model.

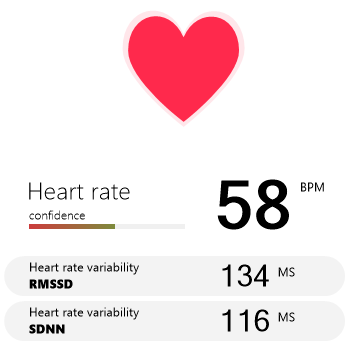

Heart Rate

FaceReader can detect your heart rate using just a simple webcam.
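Camera-based heart-rate measurement is generally done with remote photoplethysmography (rPPG): subtle color changes of the facial skin are tracked over time, and the dominant frequency within a plausible pulse band is converted to beats per minute. The page does not describe FaceReader's own method, so the sketch below only shows that final step, assuming a per-frame mean color value (for example the green channel of a face region) is already available. The function name and the 0.7 to 4 Hz band (42 to 240 bpm) are assumptions.

```python
import math
from typing import Sequence


def estimate_bpm(signal: Sequence[float], fps: float,
                 low_hz: float = 0.7, high_hz: float = 4.0) -> float:
    """Estimate heart rate (bpm) from the dominant frequency of a pulse signal.

    A naive DFT is evaluated only at bins inside [low_hz, high_hz]; the
    frequency resolution is fps / len(signal).
    """
    n = len(signal)
    if n < 2:
        raise ValueError("signal too short")
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the constant (DC) component
    best_freq, best_power = 0.0, -1.0
    for k in range(1, n // 2 + 1):
        freq = k * fps / n
        if not low_hz <= freq <= high_hz:
            continue
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0


# Synthetic 10 s trace at 30 fps with a 1.2 Hz pulse (72 bpm).
fps = 30.0
trace = [100 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
print(round(estimate_bpm(trace, fps)))  # 72
```

A real pipeline would also need face tracking, detrending and filtering against motion and lighting changes; those steps are omitted here.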

Heart Rate Variability (NEW)

Heart rate variability, reported as both RMSSD and SDNN.

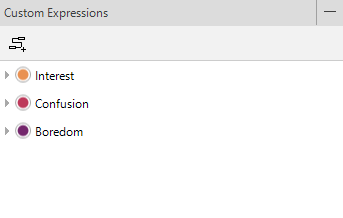

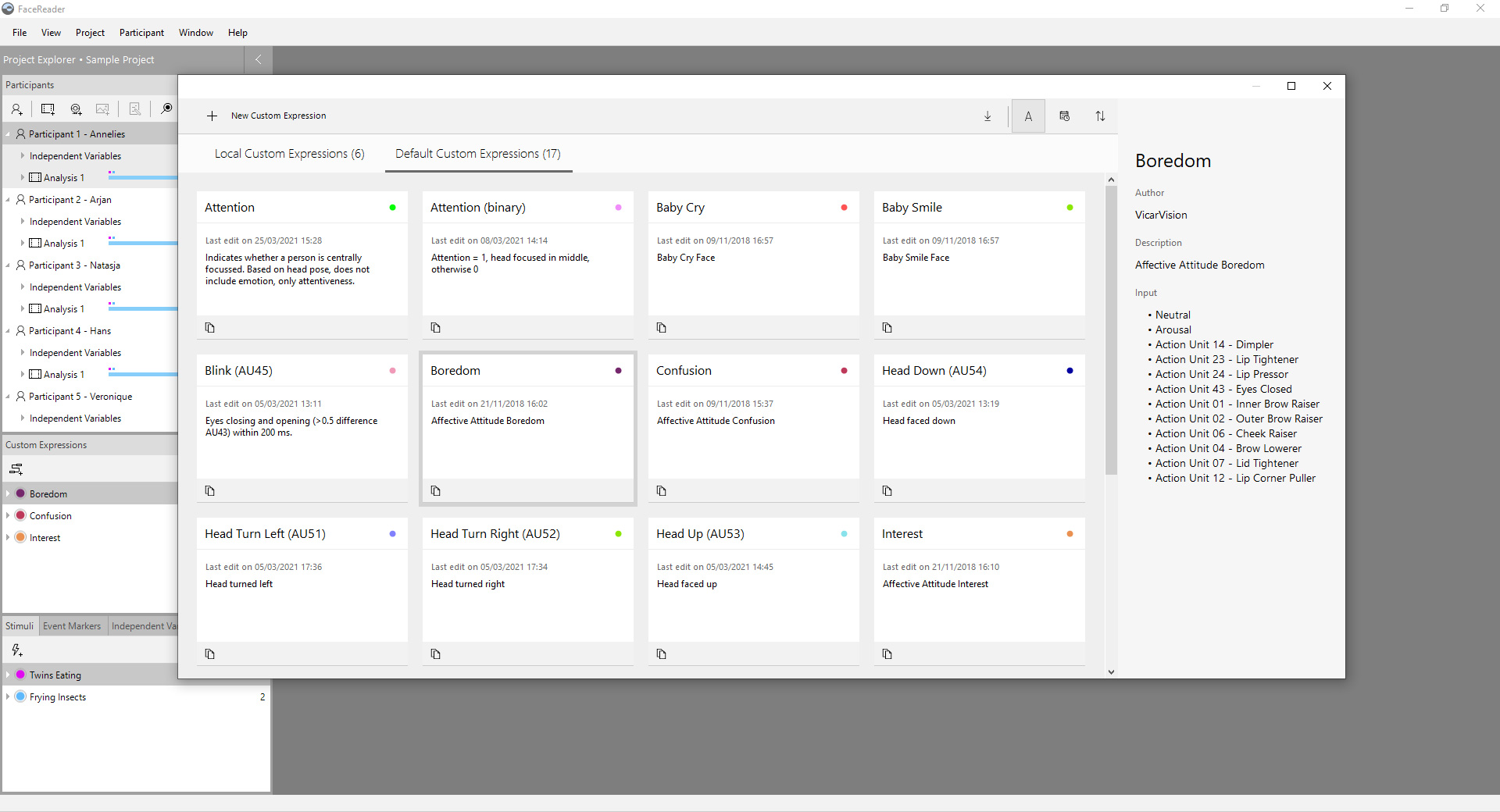

Custom Expressions (NEW)

You can create your own custom expressions using the built-in designer.

Head Positioning

Using the 3D head model, we can report the exact position of the head with respect to the camera.
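To make head pose and head position concrete: a fitted 3D head model typically yields a rotation matrix R and a translation vector t that map model coordinates into camera coordinates. The sketch below converts them into three rotation angles and a camera distance. The Z-Y-X (yaw-pitch-roll) Euler convention, the axis naming and the units are assumptions; FaceReader's own conventions are not described on this page.

```python
import math
from typing import Sequence, Tuple

Matrix3 = Sequence[Sequence[float]]


def euler_zyx(r: Matrix3) -> Tuple[float, float, float]:
    """Return (yaw, pitch, roll) in degrees, assuming R = Rz(yaw) @ Ry(pitch) @ Rx(roll).

    Near pitch = ±90° (gimbal lock) yaw and roll are not uniquely defined.
    """
    pitch = math.asin(max(-1.0, min(1.0, -r[2][0])))  # clamp guards rounding error
    yaw = math.atan2(r[1][0], r[0][0])
    roll = math.atan2(r[2][1], r[2][2])
    return math.degrees(yaw), math.degrees(pitch), math.degrees(roll)


def camera_distance(t: Sequence[float]) -> float:
    """Euclidean distance from the camera origin to the head-model origin."""
    return math.sqrt(sum(c * c for c in t))


# A head turned 30° about the z axis, 50 units (assumed cm) from the camera.
c, s = math.cos(math.radians(30)), math.sin(math.radians(30))
yaw, pitch, roll = euler_zyx([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
print(round(yaw, 1))                          # 30.0
print(camera_distance([30.0, 0.0, 40.0]))     # 50.0
```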

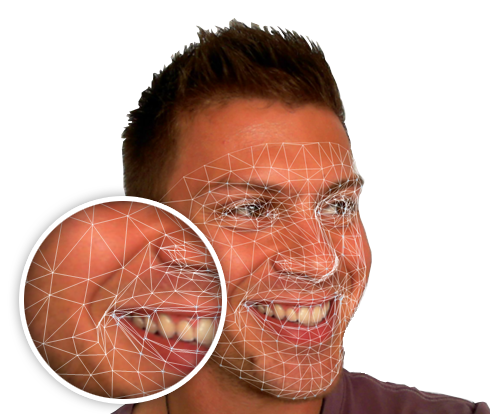

3D Face Modeling

Realtime 3D Modeling

FaceReader uses an advanced 3D face modeling technique with over 500 keypoints. The system can model a face in real time, without any manual initialization.

It’s superfast too: on most modern PCs it can run faster than real time.

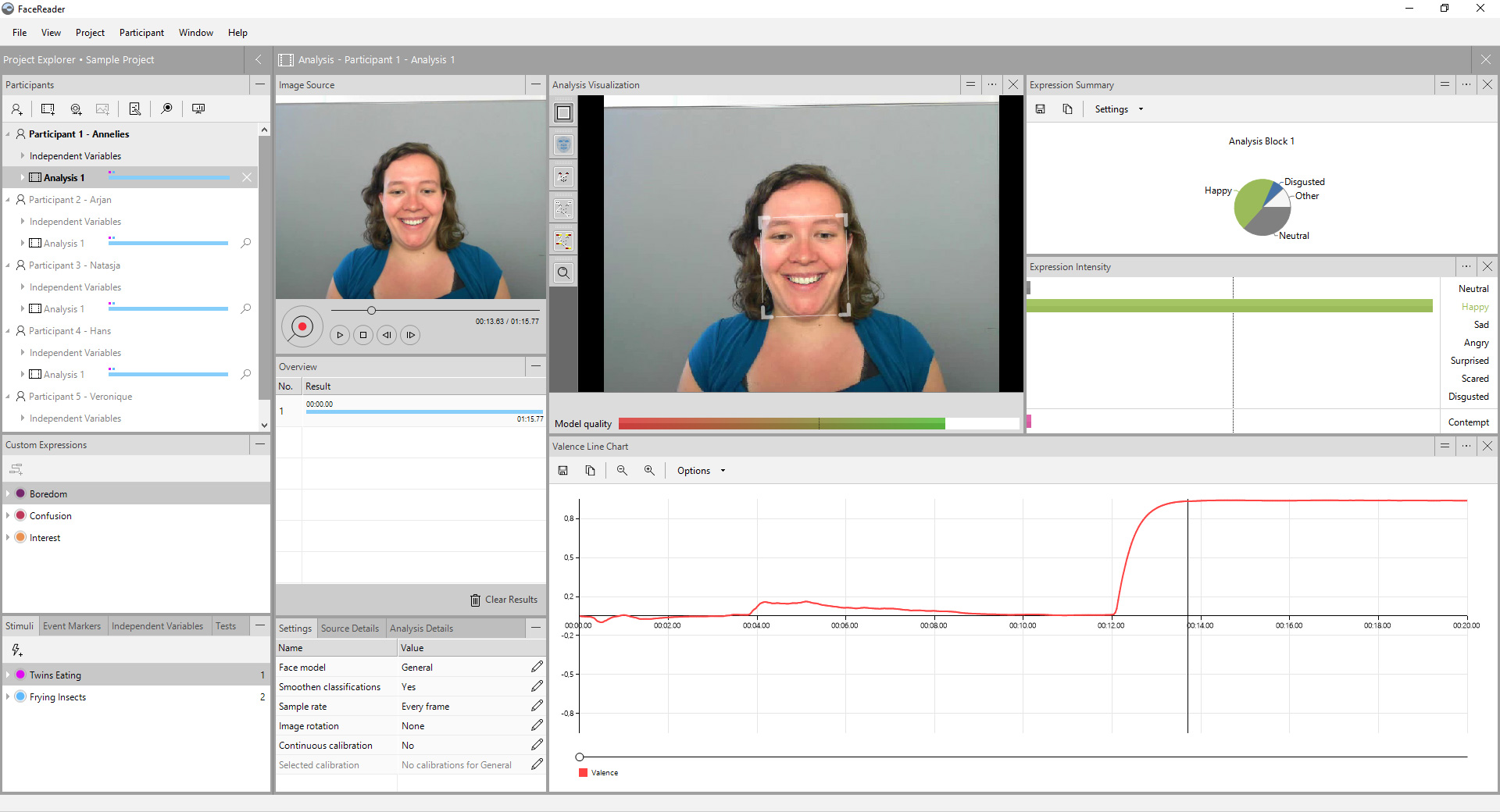

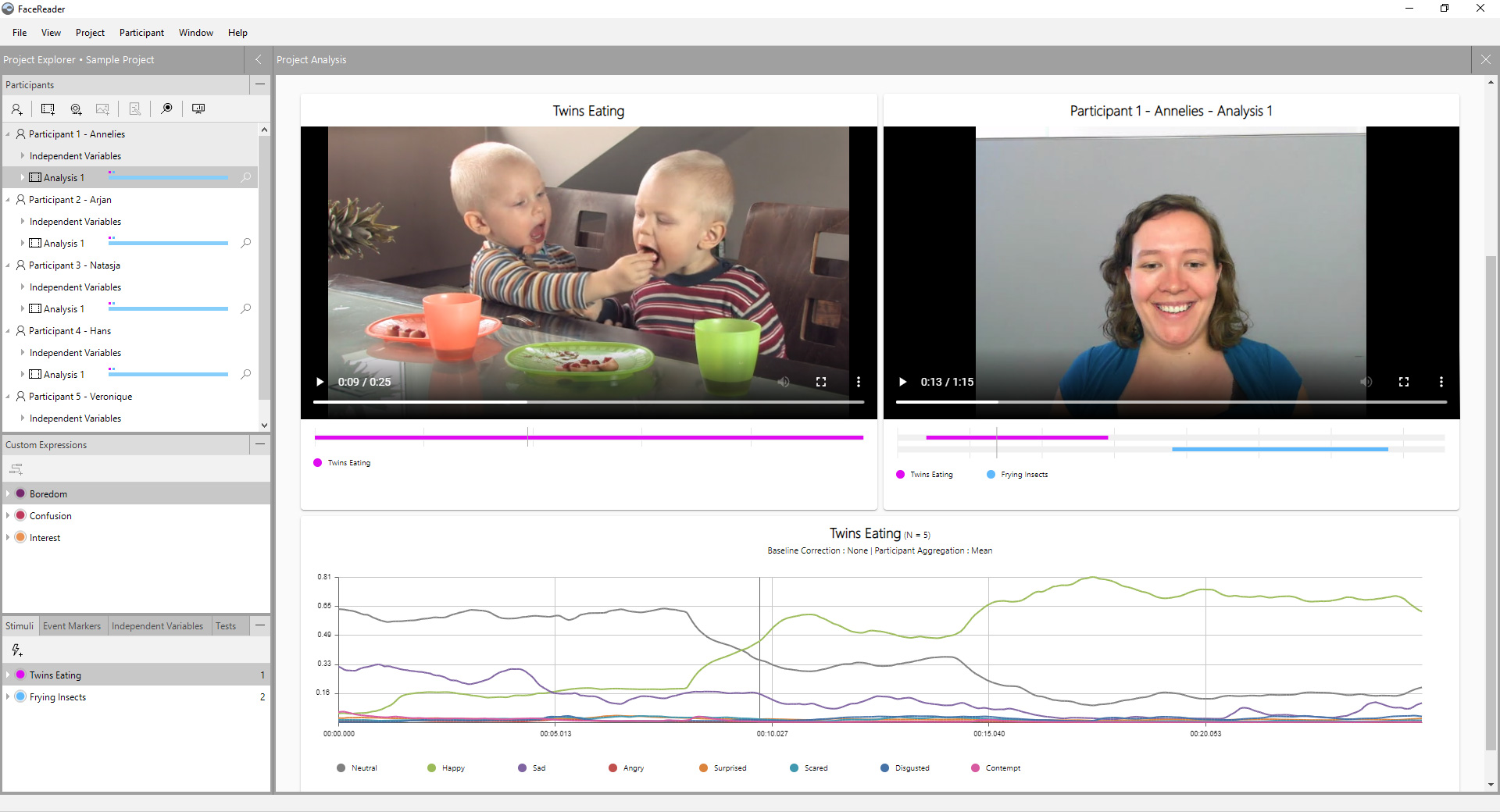

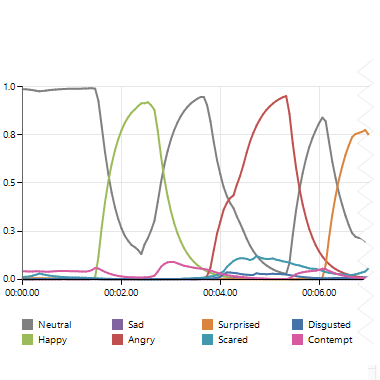

FaceReader Output Visualization

FaceReader offers a wide variety of visualization options that make the data easily accessible to the researcher.

Continuous Expression Intensities

FaceReader outputs the six basic expressions (Happy, Sad, Angry, Surprised, Scared and Disgusted), plus an extra Neutral state, as continuous intensity values between zero and one.

New in FaceReader is the addition of Contempt as a seventh expression.

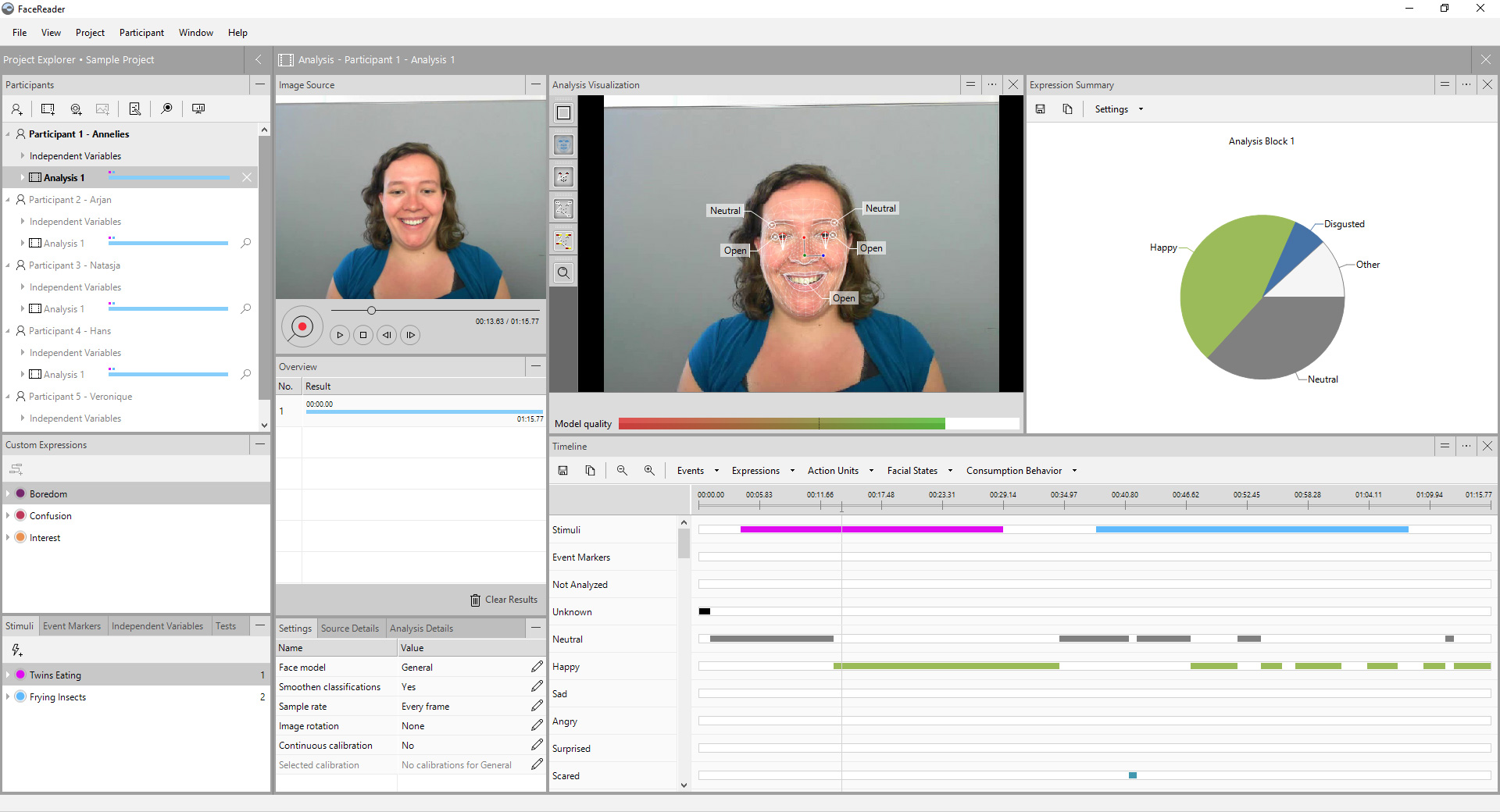

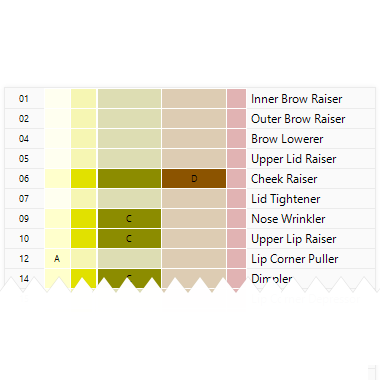

Action Unit Detection

The six basic emotions are only a fraction of the possible facial expressions. A widely used method for describing the activation of the individual facial muscles is the Facial Action Coding System (Ekman 2002).

FaceReader can detect the 20 most common Action Units (AUs).
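FACS describes expressions as combinations of AUs. As an illustration only, the sketch below checks a set of detected AUs against a few prototype combinations commonly cited in the FACS literature (for example AU6 + AU12 for a felt smile). The prototype table is an assumed simplification, not FaceReader's classifier; the function name is hypothetical.

```python
from typing import Dict, FrozenSet, Iterable, List

# Simplified prototype AU combinations (assumed). Intensity letters are dropped,
# and Contempt, usually described by a one-sided AU12/AU14, is left out.
PROTOTYPES: Dict[str, FrozenSet[int]] = {
    "Happy": frozenset({6, 12}),
    "Sad": frozenset({1, 4, 15}),
    "Surprised": frozenset({1, 2, 5, 26}),
    "Scared": frozenset({1, 2, 4, 5, 7, 20, 26}),
    "Angry": frozenset({4, 5, 7, 23}),
    "Disgusted": frozenset({9, 15, 16}),
}


def matching_prototypes(active_aus: Iterable[int]) -> List[str]:
    """Return every prototype whose AUs are all present in active_aus."""
    active = set(active_aus)
    return [name for name, aus in PROTOTYPES.items() if aus <= active]


print(matching_prototypes([6, 12, 25]))  # ['Happy']
```

Because prototypes can overlap (the Surprised set is contained in the Scared set), the function returns all matches rather than a single label.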

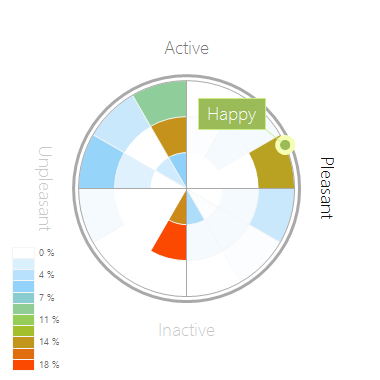

Circumplex Model of Affect

The circumplex model of affect describes the distribution of emotions in a 2D circular space, with arousal and valence dimensions.

Circumplex models (Russell 1980) are commonly used to assess liking in marketing, consumer science, and psychology.
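In a circumplex representation, each moment can be placed as a point (valence, arousal) and described by its angle and its distance from the center. The sketch below assumes both values have already been scaled to [-1, 1] (the ranges FaceReader reports are not given on this page); the function name and quadrant labels are illustrative.

```python
import math
from typing import Tuple


def circumplex_position(valence: float, arousal: float) -> Tuple[float, float, str]:
    """Return (angle_deg, intensity, quadrant) for a point in valence-arousal space.

    Assumes valence and arousal are both scaled to [-1, 1]. The angle is measured
    counter-clockwise from the positive valence axis, in [0, 360).
    """
    angle = math.degrees(math.atan2(arousal, valence)) % 360.0
    intensity = math.hypot(valence, arousal)  # distance from the neutral center
    if valence >= 0:
        quadrant = "positive/active" if arousal >= 0 else "positive/inactive"
    else:
        quadrant = "negative/active" if arousal >= 0 else "negative/inactive"
    return angle, intensity, quadrant


print(circumplex_position(0.5, 0.5)[2])  # positive/active
```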

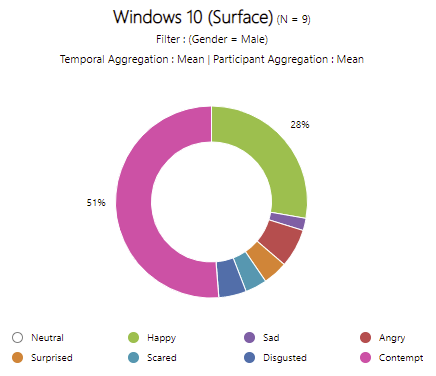

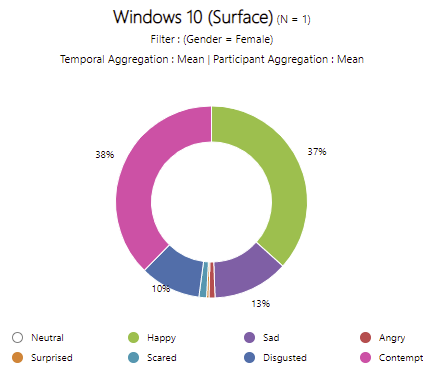

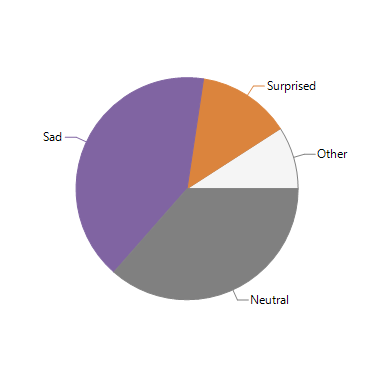

Expression Summary

A summary of the expressions during a single analysis can be viewed in an easy-to-understand pie chart showing the overall responses.

You can also select subparts of the analysis to view the expression summary for just that part.
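One simple way to derive such a pie chart is to count, for every frame in the selected segment, which expression has the highest intensity and convert the counts to percentages. The frame format (a list of intensity dictionaries) and the function name are assumptions; FaceReader may aggregate differently, for example by summing intensities instead of counting dominant frames.

```python
from collections import Counter
from typing import Dict, List, Optional


def expression_summary(frames: List[Dict[str, float]], start: int = 0,
                       end: Optional[int] = None) -> Dict[str, float]:
    """Percentage of frames in frames[start:end] in which each expression dominates."""
    selected = frames[start:end]
    if not selected:
        return {}
    dominant = Counter(max(f, key=f.get) for f in selected)
    return {name: 100.0 * count / len(selected) for name, count in dominant.items()}


frames = [
    {"Neutral": 0.7, "Happy": 0.2},
    {"Neutral": 0.3, "Happy": 0.6},
    {"Neutral": 0.2, "Happy": 0.8},
    {"Neutral": 0.5, "Sad": 0.4},
]
print(expression_summary(frames))        # {'Neutral': 50.0, 'Happy': 50.0}
print(expression_summary(frames, 1, 3))  # {'Happy': 100.0}
```

The `start`/`end` arguments play the role of selecting a subpart of the analysis.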

Heart Rate

The current heart rate, including heart rate variability (both RMSSD and SDNN).
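RMSSD and SDNN are standard time-domain HRV measures computed from successive inter-beat (RR) intervals: SDNN is the standard deviation of the intervals, and RMSSD is the root mean square of the differences between successive intervals. The sketch below assumes the RR intervals (in milliseconds) have already been extracted. It uses the population standard deviation for SDNN, whereas some tools use the sample (n - 1) form; which one FaceReader uses is not stated here.

```python
import math
import statistics
from typing import Sequence


def sdnn(rr_ms: Sequence[float]) -> float:
    """Standard deviation of the RR intervals (population form)."""
    return statistics.pstdev(rr_ms)


def rmssd(rr_ms: Sequence[float]) -> float:
    """Root mean square of the differences between successive RR intervals."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    if not diffs:
        raise ValueError("need at least two RR intervals")
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


rr = [800.0, 810.0, 790.0, 800.0]
print(round(rmssd(rr), 2))  # 14.14
print(round(sdnn(rr), 2))   # 7.07
```

RMSSD reacts mainly to beat-to-beat changes, while SDNN reflects the overall spread of the intervals, which is why the two are usually reported together.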

Facial States

FaceReader can automatically classify the state of some key parts of the participant’s face.
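The page does not say how FaceReader classifies facial states. A widely used technique for the eyes, shown here as a swapped-in illustration, is the eye aspect ratio (EAR) computed from six eye-contour landmarks: the eye height relative to its width drops sharply when the eye closes. The landmark ordering, the 0.2 threshold and the function names are assumptions.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

# Hypothetical threshold; in practice it would be tuned for the camera setup.
EAR_CLOSED_THRESHOLD = 0.2


def eye_aspect_ratio(p: Sequence[Point]) -> float:
    """EAR for landmarks p1..p6: p1/p4 are the corners, p2/p3 upper lid, p6/p5 lower lid."""
    vertical = math.dist(p[1], p[5]) + math.dist(p[2], p[4])
    horizontal = math.dist(p[0], p[3])
    return vertical / (2.0 * horizontal)


def eye_state(p: Sequence[Point], threshold: float = EAR_CLOSED_THRESHOLD) -> str:
    """Classify the eye as 'open' or 'closed' by thresholding the EAR."""
    return "closed" if eye_aspect_ratio(p) < threshold else "open"


open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.2), (2, 0.2), (3, 0), (2, -0.2), (1, -0.2)]
print(eye_state(open_eye), eye_state(closed_eye))  # open closed
```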

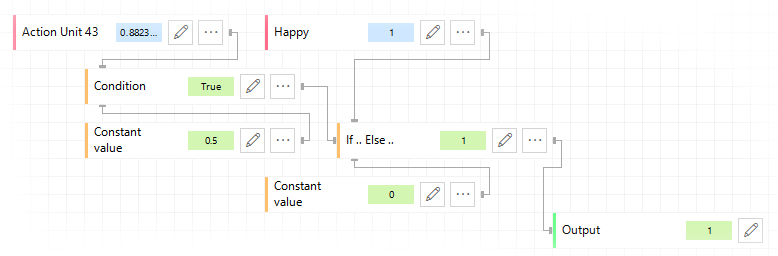

Custom Expressions

Advanced modeling of your own expression definitions.

Make your own

You can design your own algorithms for the analysis of workload, pain, embarrassment, and much more by combining variables such as facial expressions, Action Units, and heart rate.

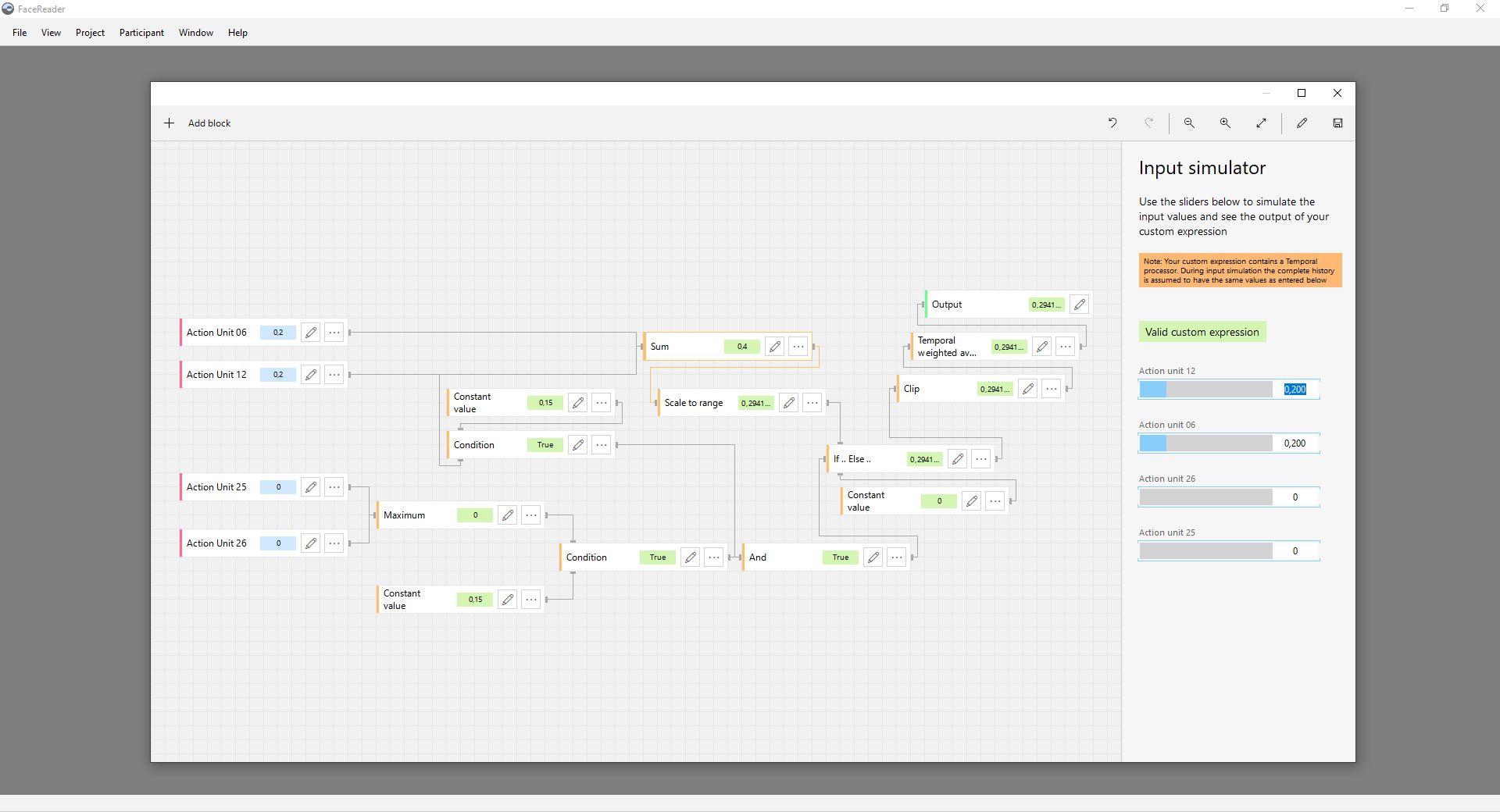

Input Blocks

All the classifications are available as input blocks. You can use Facial Expressions, Valence & Arousal, Action Units, Head Orientation, Head Position, Gaze Angles and Heart Rate.

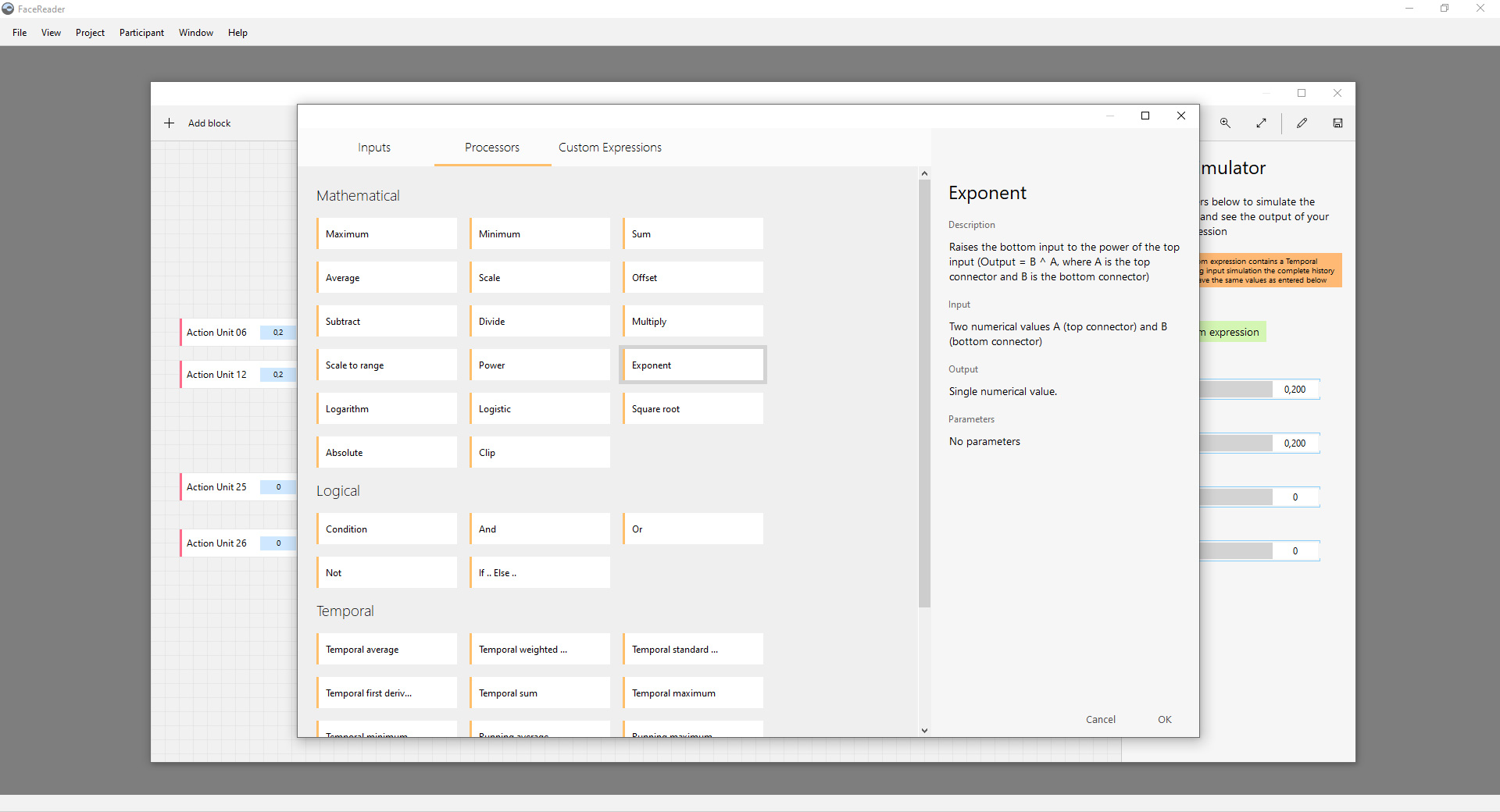

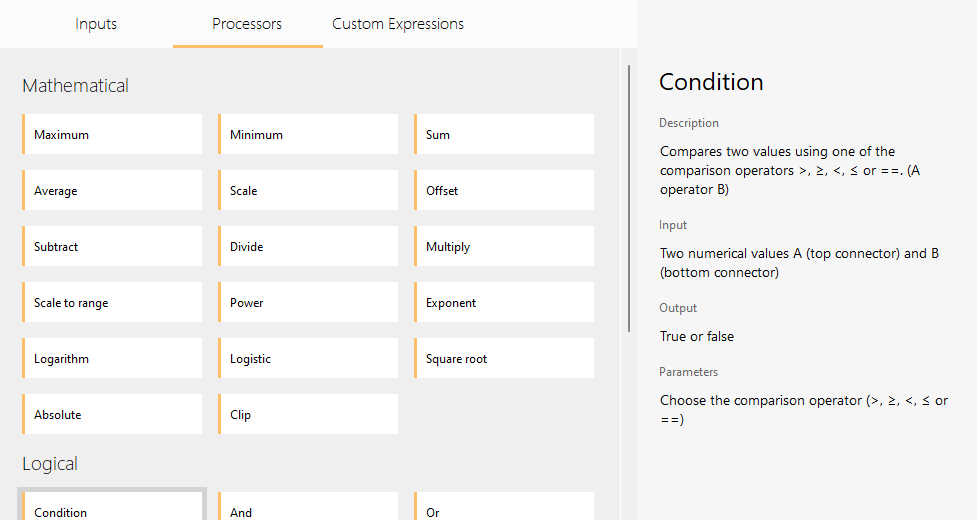

Processor Blocks

The processor blocks let you process the input blocks. They include mathematical operations such as Maximum, Minimum, Sum and Average, as well as logical operations such as AND, OR and If... Else... statements.

You can do temporal averaging and other operations too.
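The block designer itself is graphical, but the idea of wiring input blocks into processor blocks can be sketched in code. Everything below is hypothetical: the functions mirror some of the operations named above (Average, AND, If... Else..., temporal averaging), the input keys are assumed, and the example score combining AU4 with the Angry intensity is an arbitrary illustration, not a validated measure.

```python
from collections import deque
from typing import Deque, Dict, List

Frame = Dict[str, float]  # one frame of input-block values (hypothetical keys)


# --- processor blocks (hypothetical equivalents of the designer's blocks) ---
def average(*values: float) -> float:
    return sum(values) / len(values)


def logical_and(*conditions: bool) -> bool:
    return all(conditions)


def if_else(condition: bool, then_value: float, else_value: float) -> float:
    return then_value if condition else else_value


class TemporalAverage:
    """Moving average over the last `window` frames."""

    def __init__(self, window: int) -> None:
        self.values: Deque[float] = deque(maxlen=window)

    def __call__(self, value: float) -> float:
        self.values.append(value)
        return sum(self.values) / len(self.values)


def example_score(frames: List[Frame], window: int = 3) -> List[float]:
    """Arbitrary example: mean of AU4 and Angry, gated on both > 0.3, then smoothed."""
    smooth = TemporalAverage(window)
    scores = []
    for f in frames:
        au4, angry = f.get("AU4", 0.0), f.get("Angry", 0.0)  # input blocks
        active = logical_and(au4 > 0.3, angry > 0.3)
        raw = if_else(active, average(au4, angry), 0.0)
        scores.append(smooth(raw))
    return scores


frames = [{"AU4": 0.5, "Angry": 0.7}, {"AU4": 0.1, "Angry": 0.9}, {"AU4": 0.6, "Angry": 0.4}]
print([round(s, 3) for s in example_score(frames)])  # [0.6, 0.3, 0.367]
```

Keeping each block a small pure function (plus one stateful smoother) makes it easy to recombine them into different custom expressions.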

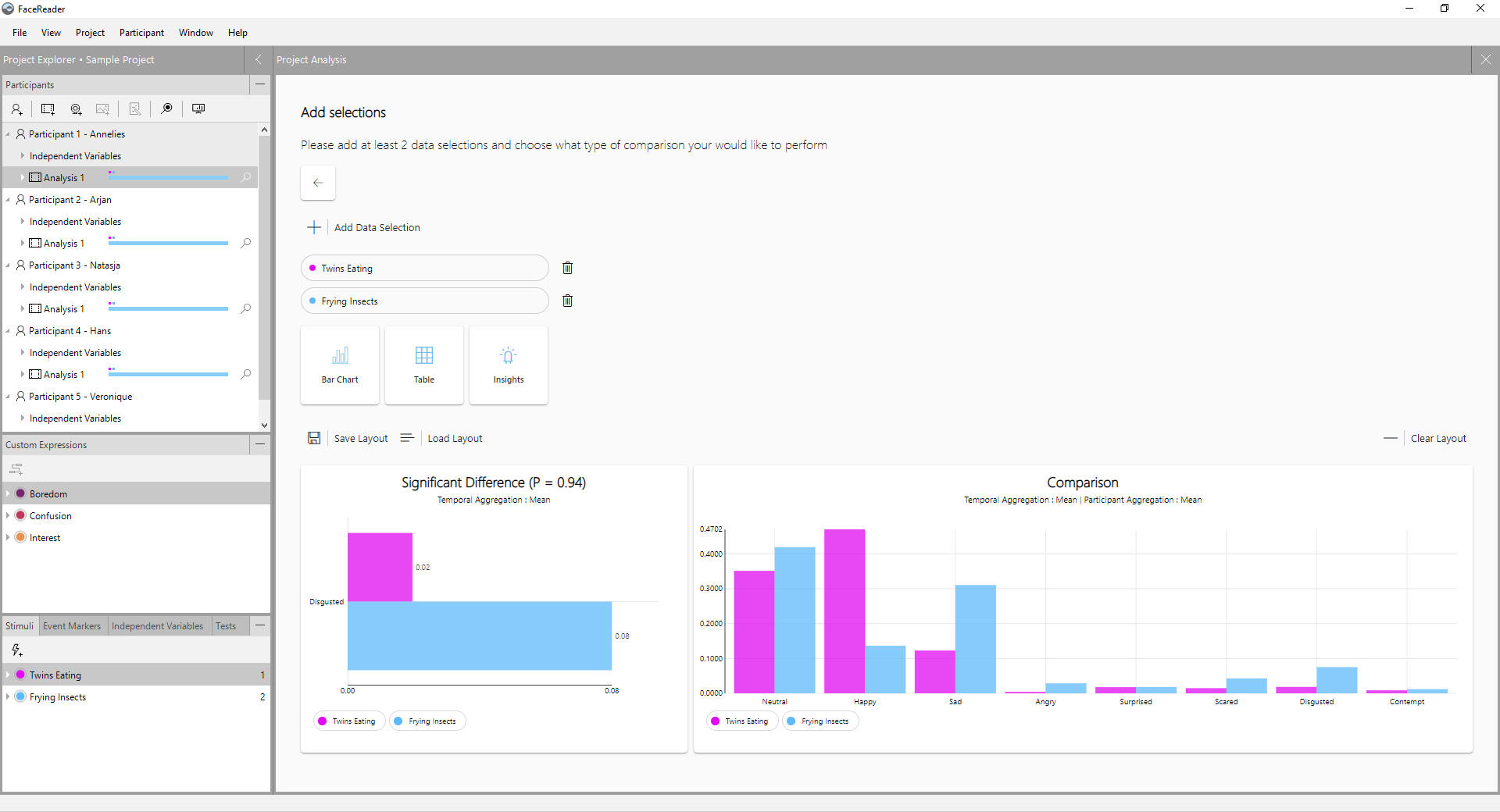

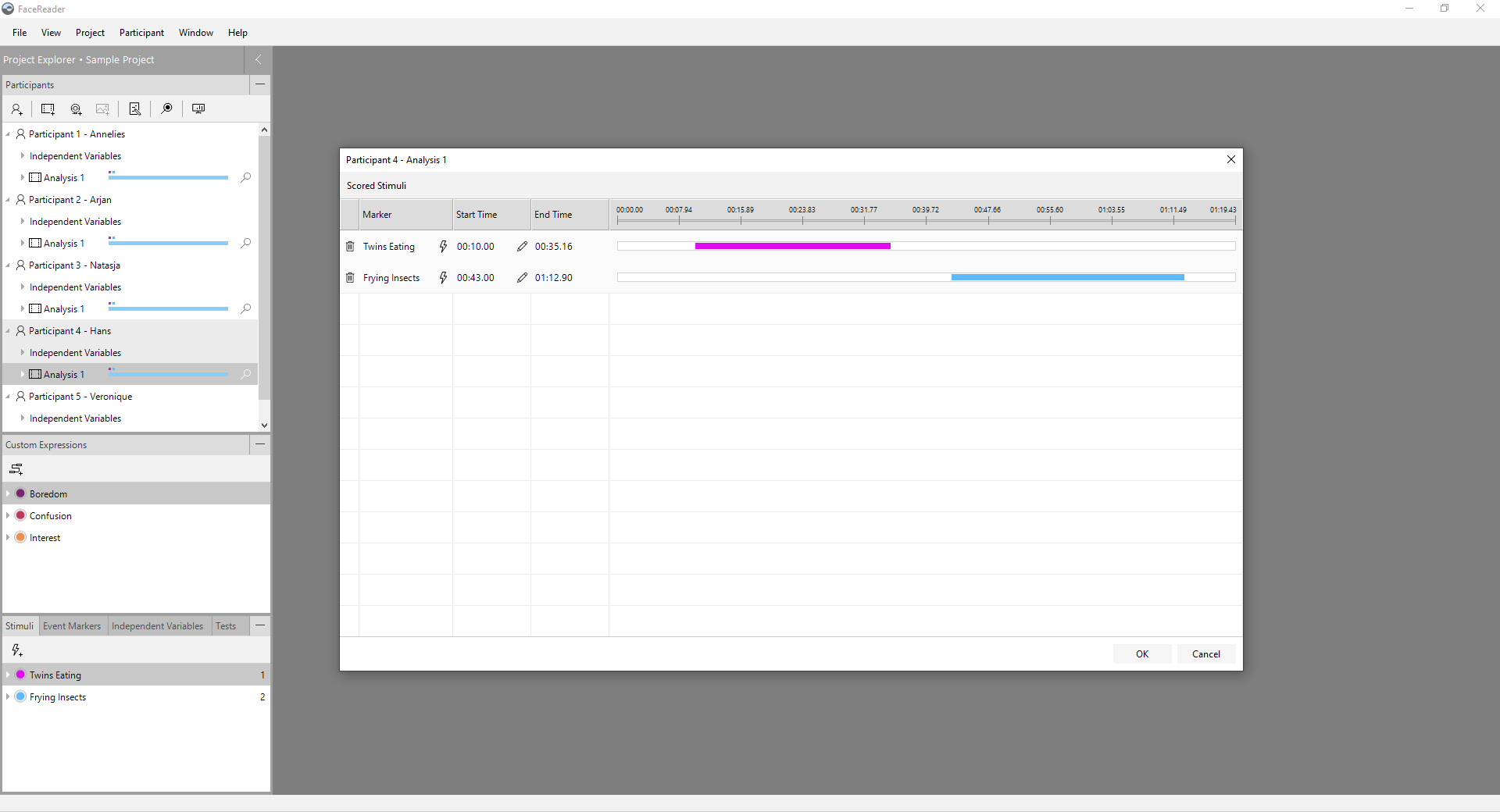

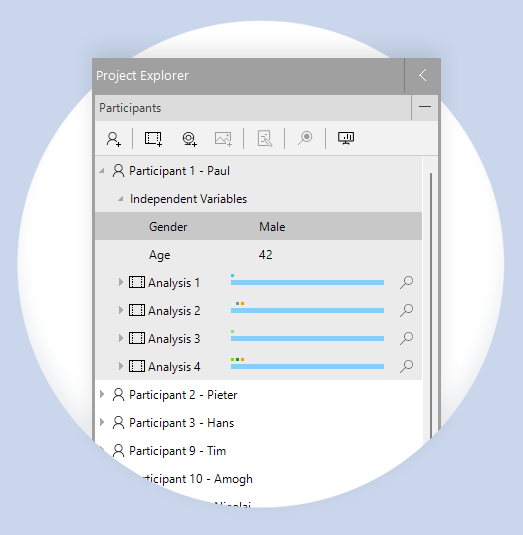

Project Analysis

Combine the results of all your participants.

All your participants in one project

In FaceReader you can (re)create your complete experiment, adding all your participants to a single project. The Project Analysis Module lets you analyze how groups of participants respond to your stimuli.

Participants can be grouped based on independent variables, like age, gender or any manually entered variable.

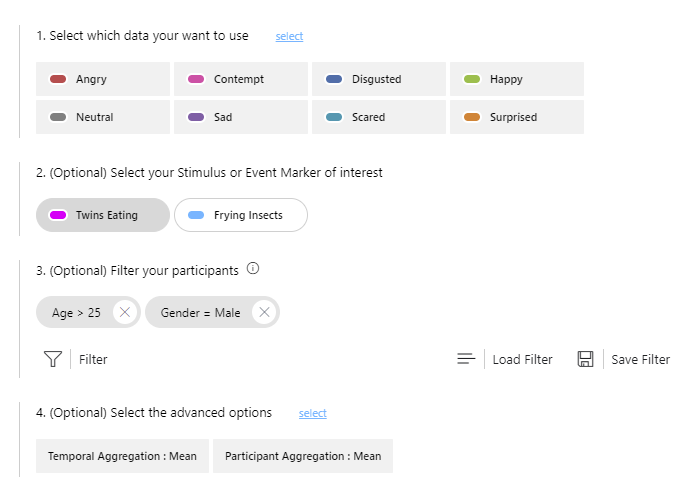

Select & Filter

Fine-grained data selection and participant filtering give you insight into subgroups within your data.

Compare

Quickly see the difference between subgroups or between stimuli.
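Grouping, filtering and comparing can be sketched with plain data structures: each participant record carries independent variables and a per-stimulus score, participants are filtered on one variable, grouped on another, and the group means are compared. The record layout, the variable and stimulus names, and the use of a single score per stimulus are assumptions; the numbers are made-up toy values, not FaceReader output.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Callable, Dict, List

Participant = Dict[str, Any]


def group_means(participants: List[Participant], group_by: str, stimulus: str,
                keep: Callable[[Participant], bool] = lambda p: True) -> Dict[Any, float]:
    """Mean score for `stimulus` per value of the independent variable `group_by`."""
    groups: Dict[Any, List[float]] = defaultdict(list)
    for p in participants:
        if keep(p):  # participant filter
            groups[p[group_by]].append(p["scores"][stimulus])
    return {key: mean(values) for key, values in groups.items()}


def under_40(p: Participant) -> bool:
    return p["age"] < 40


participants = [
    {"id": "P1", "gender": "female", "age": 24, "scores": {"ad_A": 0.40, "ad_B": 0.10}},
    {"id": "P2", "gender": "male", "age": 31, "scores": {"ad_A": 0.20, "ad_B": 0.30}},
    {"id": "P3", "gender": "female", "age": 45, "scores": {"ad_A": 0.00, "ad_B": 0.20}},
    {"id": "P4", "gender": "male", "age": 29, "scores": {"ad_A": 0.30, "ad_B": 0.10}},
]
by_gender = group_means(participants, "gender", "ad_A", keep=under_40)
print(by_gender)  # {'female': 0.4, 'male': 0.25}

# Compare subgroups (female vs male) or stimuli (ad_A vs ad_B) by differencing means.
gender_gap = by_gender["female"] - by_gender["male"]
ad_b = group_means(participants, "gender", "ad_B", keep=under_40)
stimulus_gap = {g: by_gender[g] - ad_b[g] for g in by_gender}
```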