Student project with Emotion Recognition Software for Online Videoconferencing

Since the pandemic, online meetings have become a widespread phenomenon. During these meetings it is difficult to keep track of all nonverbal behaviour. We have used our research software FaceReader to develop a new tool, “VICO”, to gain Visual Insights for Video Conferencing. By measuring the attention, emotional expressions and reactions of the members in the call, one can better understand them. This helps to optimize online meetings, such as online focus groups. Finn Prinsenberg and Michel Schmale, students from the University of Twente, have used the tool in a very interesting design study to help users learn to read the room in an online environment. This is a short summary of their project.

Aim of the project

The general aim of the project was to understand and enhance videoconferencing: how can one empower the different users to “read the room” in a similar way to meetings in person? To do this, the idea was to create an object that uses state-of-the-art Emotion Recognition (ER) to convey the general emotional state of the group to each individual within the video conference. This is done with colour-changing lights inside the object, with different colours representing different emotions.

In the first stage of the design process a few ideation sessions were conducted, with the goal of finding an idea for a tangible object that could improve the user's sense of the group's emotions. In this way different possibilities were discovered and compared to find the most promising direction for reaching the stated goal. The final idea was then prototyped in multiple iterations.

Prototype

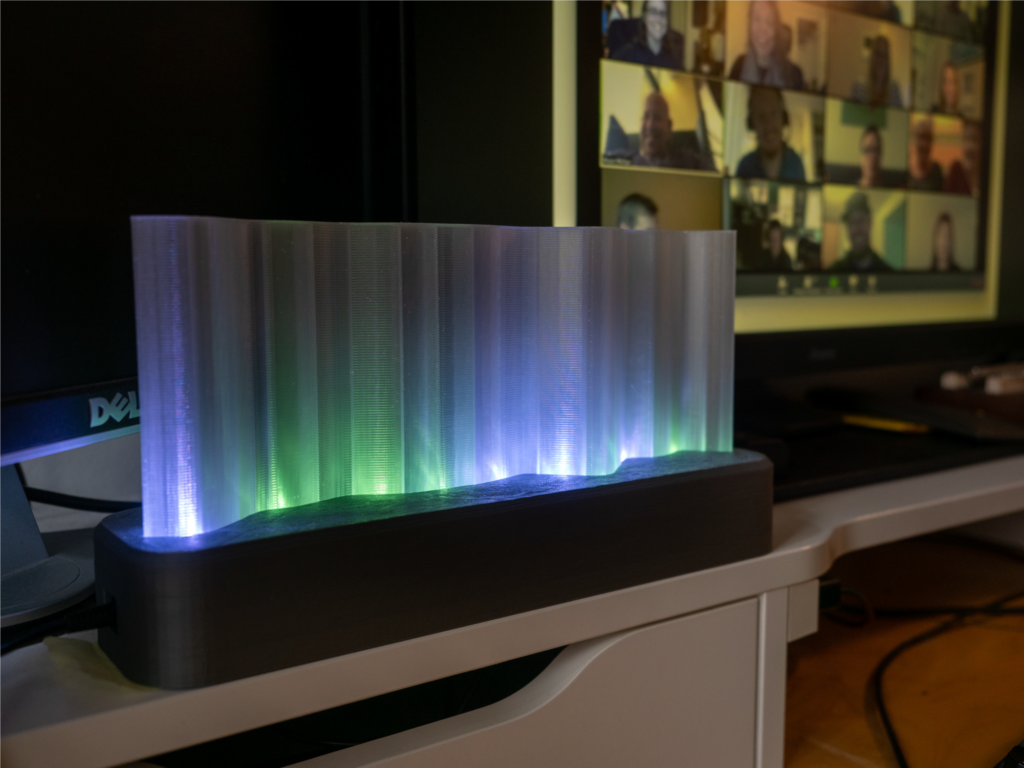

During the design process and the subsequent tests we learned two important lessons. 1. The physical shape of the object and the animations of the lights really mattered (e.g. a cloud was associated with negative emotions). 2. Instant readout (0.5 s) from the ER software on the object leads to a noisy and chaotic display of colours, which is too distracting to stay in the background for the user. With a delayed output and an averaging of the emotion values over a longer period (3 s), the object can stay more in the background of the user's vision.
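The averaging described in the second lesson can be illustrated with a simple sliding-window mean. This is only a minimal sketch and not the students' implementation: the class name `EmotionSmoother`, the dictionary format of a reading and the assumption that one reading arrives every 0.5 s are all hypothetical.

```python
from collections import deque


class EmotionSmoother:
    """Average emotion scores over a sliding time window (illustrative only)."""

    def __init__(self, window_s=3.0, sample_interval_s=0.5):
        # Assumption: one reading per sample interval, so a 3 s window
        # at 0.5 s per reading holds the 6 most recent readings.
        size = max(1, round(window_s / sample_interval_s))
        self.samples = deque(maxlen=size)

    def update(self, scores):
        """Add one reading (emotion name -> score) and return the windowed mean."""
        self.samples.append(scores)
        emotions = set().union(*self.samples)
        count = len(self.samples)
        return {
            e: sum(s.get(e, 0.0) for s in self.samples) / count
            for e in emotions
        }
```

Feeding every new reading into `update` and sending only the returned averages to the lights means that a single brief expression shifts the displayed colours gradually instead of abruptly.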

Based on the lessons learned in the prototyping stage, we designed a final prototype. Here the shape of the object is modelled on an aurora, having identified this as a concept that doesn't have a common association with emotions. The light illuminates the transparent part of the object. The whole object is big enough to be noticeable in the peripheral vision of the user. The final prototype works by recognizing the facial expressions of each member in the video conference and sending that information to the object, where the distribution of emotions is displayed with the corresponding colours. The picture shows a first-person view of the prototype. With our proposed design we aimed to improve “reading the room” for people using video conferencing. The final object is able to display emotions, recognised by ER software, in the form of coloured light. Further research is required to learn whether the object really helps users to read the room better.
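One possible way to turn the emotions of all participants into a distribution of coloured light is sketched below. It is an assumption-laden illustration, not the project's actual code: the colour table `EMOTION_COLOURS`, the function names and the idea of a row of individually addressable lights are hypothetical, and the project's real colour choices are not stated here.

```python
# Hypothetical emotion-to-colour table (RGB values chosen for illustration).
EMOTION_COLOURS = {
    "happy": (255, 200, 0),
    "sad": (0, 0, 255),
    "angry": (255, 0, 0),
    "neutral": (255, 255, 255),
}


def group_distribution(participants):
    """Sum per-participant emotion scores and normalise them to sum to 1."""
    totals = {emotion: 0.0 for emotion in EMOTION_COLOURS}
    for scores in participants:
        for emotion in totals:
            totals[emotion] += scores.get(emotion, 0.0)
    overall = sum(totals.values())
    if overall == 0:
        return {emotion: 0.0 for emotion in totals}
    return {emotion: value / overall for emotion, value in totals.items()}


def light_colours(distribution, n_lights=10):
    """Give each emotion a share of the lights proportional to its weight."""
    lights = []
    for i in range(n_lights):
        position = (i + 0.5) / n_lights  # centre of light i on a 0..1 scale
        cumulative = 0.0
        colour = (0, 0, 0)  # light stays off if no emotion covers this position
        for emotion, share in distribution.items():
            cumulative += share
            if position < cumulative:
                colour = EMOTION_COLOURS[emotion]
                break
        lights.append(colour)
    return lights
```

For example, `light_colours(group_distribution([{"happy": 1.0}, {"sad": 1.0}]), n_lights=4)` colours the first two lights with the "happy" colour and the last two with the "sad" colour.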

If you want more information on the project or are interested in using the tool, send us an email!