EyeReader – Webcam-based Eye Tracking Technology

Our eyes are our windows to the world. Beyond facial expressions, the face offers other behavioural cues that carry relevant information. Our gaze reveals what we are looking at, which often indicates what we find important. The size of our pupils responds to variations in lighting, but also to task demands. As part of the MIT EyeReader project, together with our partner Noldus, we researched webcam-based eye tracking and pupil analysis. Standard eye trackers use infrared cameras to assess these eye metrics and thus require specialized hardware. Eye tracking from a webcam makes applications far more flexible. We will continue developing the technology; here we describe the validation results for two of the most important components of the successfully completed project.

Assessing pupil size: a challenging task

For pupil diameter estimation, two methods were implemented and analysed: one based on classic computer vision (classic CV) and one based on deep learning. Both methods segment the iris image such that the region in the middle of the iris image approximates the pupil (see the examples of the processing steps for the deep learning method (above left image) and the classic CV method (right image)). For testing, manually annotated pictures and the pupil diameter output of the Tobii Pro Nano were used. The two methods differed in the number of images they could analyse: the deep learning method analysed 100% of the images and the classic method 70%.
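To make the idea of a segmentation-based estimate concrete, here is a minimal sketch in the spirit of a classic CV approach. It is not the EyeReader implementation: the fixed intensity threshold, the function names and the synthetic test image are all assumptions made for illustration. It counts the dark pixels in a grayscale iris crop and reports the diameter of a circle with the same area.

```python
import math


def pupil_diameter_px(gray, threshold=50):
    """Estimate pupil diameter (in pixels) from a grayscale iris crop.

    gray: list of rows, each a list of 0-255 intensities.
    threshold: hypothetical cut-off; pixels darker than this count as pupil.
    Returns None when no pixel is dark enough to segment.
    """
    area = sum(1 for row in gray for value in row if value < threshold)
    if area == 0:
        return None
    # Diameter of a circle with the same area as the dark region.
    return 2.0 * math.sqrt(area / math.pi)


def synthetic_eye(size=41, pupil_radius=8, pupil_value=20, iris_value=120):
    """Toy image: a dark disc (pupil) on a brighter background (iris)."""
    c = size // 2
    return [
        [
            pupil_value
            if (x - c) ** 2 + (y - c) ** 2 <= pupil_radius ** 2
            else iris_value
            for x in range(size)
        ]
        for y in range(size)
    ]


# Strong pupil/iris contrast: the dark disc is found.
estimate = pupil_diameter_px(synthetic_eye())

# Weak contrast: the pupil is not darker than the threshold, so nothing is found.
failed = pupil_diameter_px(synthetic_eye(pupil_value=60, iris_value=70))
```

In this toy setup the low-contrast image yields no estimate at all, which is one plausible way a threshold-style method can end up analysing fewer images than a learned segmentation model.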

Over the whole dataset, the results are mixed. While some tasks in some participants show a strong correlation (r = 0.86) between the measured pupil size and the ground truth, others show no correlation whatsoever. On average there is a moderate positive correlation, and the two methods performed similarly (DL: r = 0.39 vs. CV: r = 0.37). Several participants showed a strong positive correlation, but all of them had blue eyes and no glasses. A strong contrast between the pupil and the surrounding iris is important for pupil size estimation. This makes darker eye colours challenging, as well as glasses that cover the pupil with light reflections. Infrared has an advantage here: because infrared light is invisible, it can illuminate the iris without affecting the size of the pupil. Another difficulty is the lack of suitable datasets. Most available datasets were recorded in infrared only and therefore lack the problems characteristic of visible light.
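As an illustration of how such a correlation can be computed for one recording, the sketch below implements Pearson's r between a webcam-based estimate and a reference signal. The function name and the sample values are made up for this example and are not data from the study.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equally long series of measurements."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # A constant series has no defined correlation.
        return float("nan")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical pupil diameters in mm for one participant and one task.
webcam_mm = [3.1, 3.4, 3.0, 3.9, 4.2]
reference_mm = [3.0, 3.5, 3.2, 4.0, 4.1]
r = pearson_r(webcam_mm, reference_mm)
```

Computing r separately per participant and task, and only then averaging, keeps a few well-tracked recordings from hiding the recordings where the estimate does not follow the reference at all.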

Our research shows that pupil diameter estimation from USB cameras is possible in certain cases, but is not yet ready for implementation. Future research could optimize the current method by increasing the amount of available data and further improving the pre-processing steps.

Gaze tracking: ready for implementation

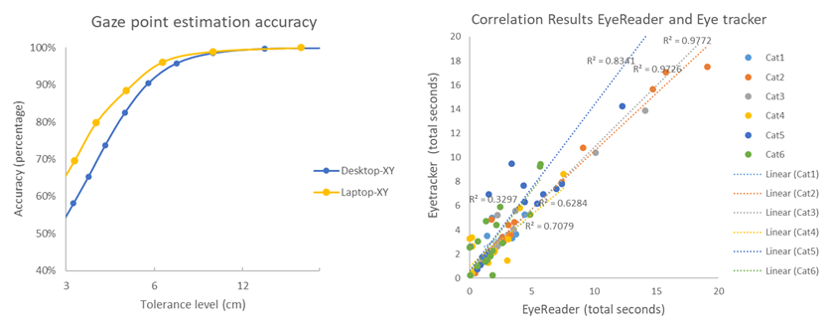

The EyeReader algorithm estimates the gaze direction and relates it to an image on the screen. The neural network is trained on many labelled datasets of screen locations and video recordings to learn the relation between the image of the eyes and the gaze vector. With the help of a calibration task, in which the participant follows dots on a screen, the gaze vector can be converted into 2D (x, y) points on the screen. Tests with our own validation dataset show that the system predicts gaze points on screen with an average deviation of 2.4 cm (on average 5.2% of the screen). This is comparable to competing systems on the market. The accuracy was slightly higher for the task performed on the laptop than for the task on a separate screen (see left image below). This is likely due to the size and fixed position of the laptop screen. There were no big differences between blue and brown eyes. Glasses can in some cases reduce the accuracy of the results, particularly when they are thick and show a light reflection.
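The calibration step can be pictured with a small sketch. It assumes, purely for illustration, that the network outputs a two-component gaze value per frame and that a separate straight-line fit per axis is enough to map it to screen centimetres. The actual EyeReader mapping is not described here and may be more elaborate. All names and numbers below are hypothetical.

```python
import math


def fit_line(inputs, targets):
    """Least-squares fit of targets ~ slope * inputs + intercept."""
    n = len(inputs)
    mean_in = sum(inputs) / n
    mean_out = sum(targets) / n
    var = sum((v - mean_in) ** 2 for v in inputs)
    cov = sum((v - mean_in) * (t - mean_out) for v, t in zip(inputs, targets))
    slope = cov / var
    return slope, mean_out - slope * mean_in


def calibrate(raw_gaze, dot_positions_cm):
    """Return a function mapping a raw gaze pair to screen coordinates in cm."""
    ax, bx = fit_line([g[0] for g in raw_gaze], [d[0] for d in dot_positions_cm])
    ay, by = fit_line([g[1] for g in raw_gaze], [d[1] for d in dot_positions_cm])
    return lambda g: (ax * g[0] + bx, ay * g[1] + by)


def mean_error_cm(predicted, actual):
    """Average Euclidean distance between predicted and true screen points."""
    distances = [math.hypot(p[0] - a[0], p[1] - a[1]) for p, a in zip(predicted, actual)]
    return sum(distances) / len(distances)


# Hypothetical calibration: raw gaze values recorded while the participant
# looked at four dots in the corners of a 30 cm x 17 cm screen.
raw = [(-0.2, -0.1), (0.2, -0.1), (-0.2, 0.1), (0.2, 0.1)]
dots = [(0.0, 0.0), (30.0, 0.0), (0.0, 17.0), (30.0, 17.0)]
to_screen = calibrate(raw, dots)

centre = to_screen((0.0, 0.0))
error = mean_error_cm([to_screen(g) for g in raw], dots)
```

An average deviation in centimetres, like the one reported above, would be measured on validation points that were not used for calibration. The sketch evaluates on the calibration dots only to keep the example short.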

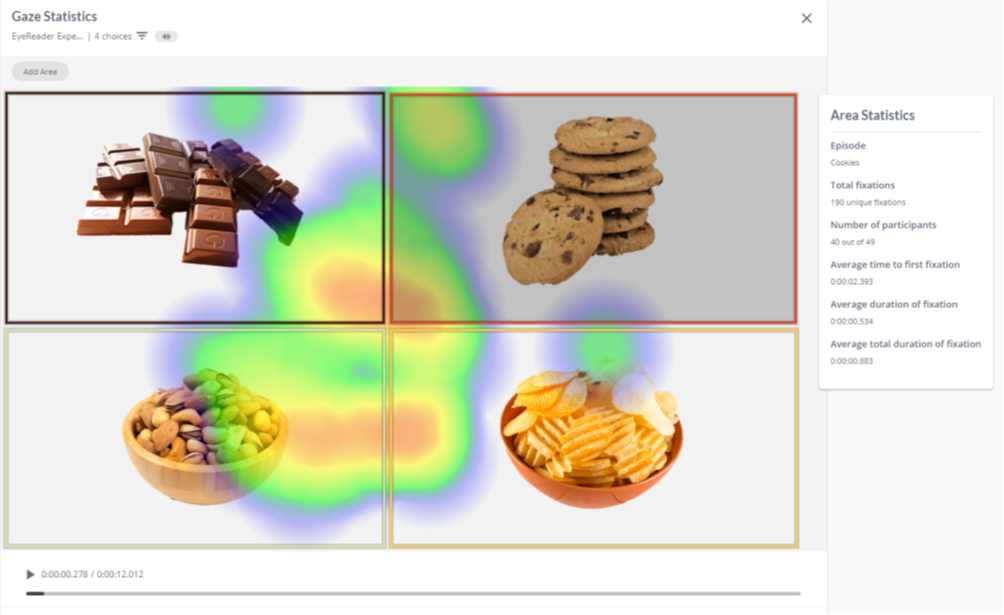

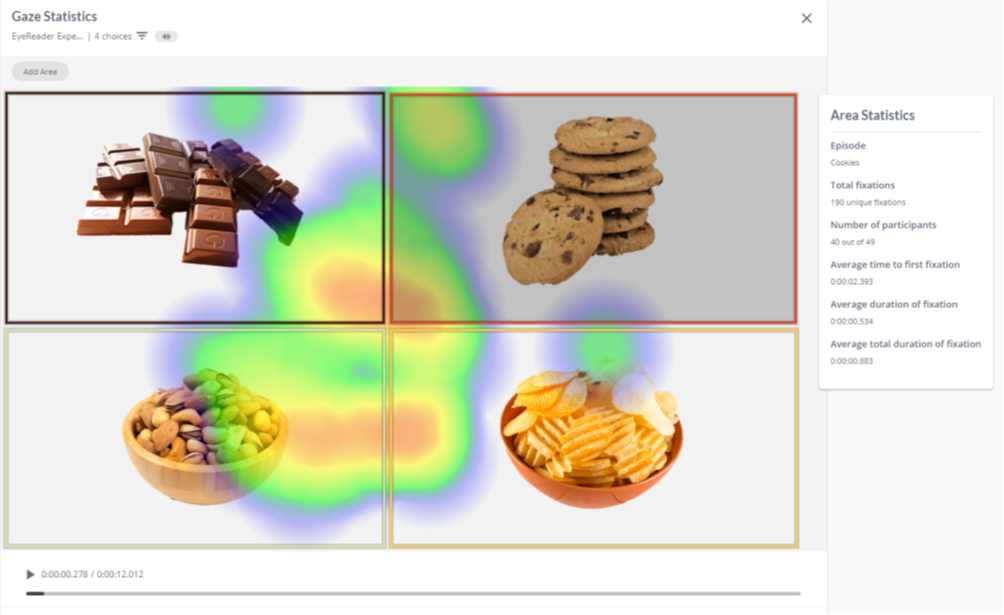

When comparing EyeReader to the gaze estimations of the Tobii Pro Nano eye tracker, very strong correlations were found between the estimates of total fixation duration for each category (see image above on the right). More detailed results of the gaze estimation validation study can be found in the white paper (available via Noldus). The results show that EyeReader is well suited to researching realistic consumer behaviour where you expect clearly discernible effects. You can use EyeReader in a flexible and mobile lab environment, or within the FaceReader Online platform for online testing (see example of a heatmap below).
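A per-category duration of this kind can be approximated as follows. The sketch is a simplification under stated assumptions: each category is a rectangular screen area, gaze is sampled at a fixed interval, and every sample inside an area counts towards its total, with no fixation filter applied. The area names, coordinates and sampling interval are invented for the example.

```python
def dwell_time_per_category(samples, areas, sample_interval_s):
    """Sum the time gaze samples spend inside each named rectangle.

    samples: list of (x, y) gaze points on screen.
    areas: dict mapping a category name to (left, top, right, bottom).
    sample_interval_s: time between two consecutive samples, in seconds.
    """
    totals = {name: 0.0 for name in areas}
    for x, y in samples:
        for name, (left, top, right, bottom) in areas.items():
            if left <= x < right and top <= y < bottom:
                totals[name] += sample_interval_s
                break  # A sample counts for at most one category.
    return totals


# Hypothetical layout with two areas of interest, coordinates in cm.
areas = {"product": (0, 0, 10, 10), "price": (10, 0, 20, 10)}
samples = [(1, 1), (2, 2), (12, 3), (25, 5)]  # The last sample hits no area.
totals = dwell_time_per_category(samples, areas, sample_interval_s=0.5)
```

Running the same aggregation on the output of two trackers gives one pair of totals per category, and those pairs can then be correlated across categories and participants.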