Author Archive

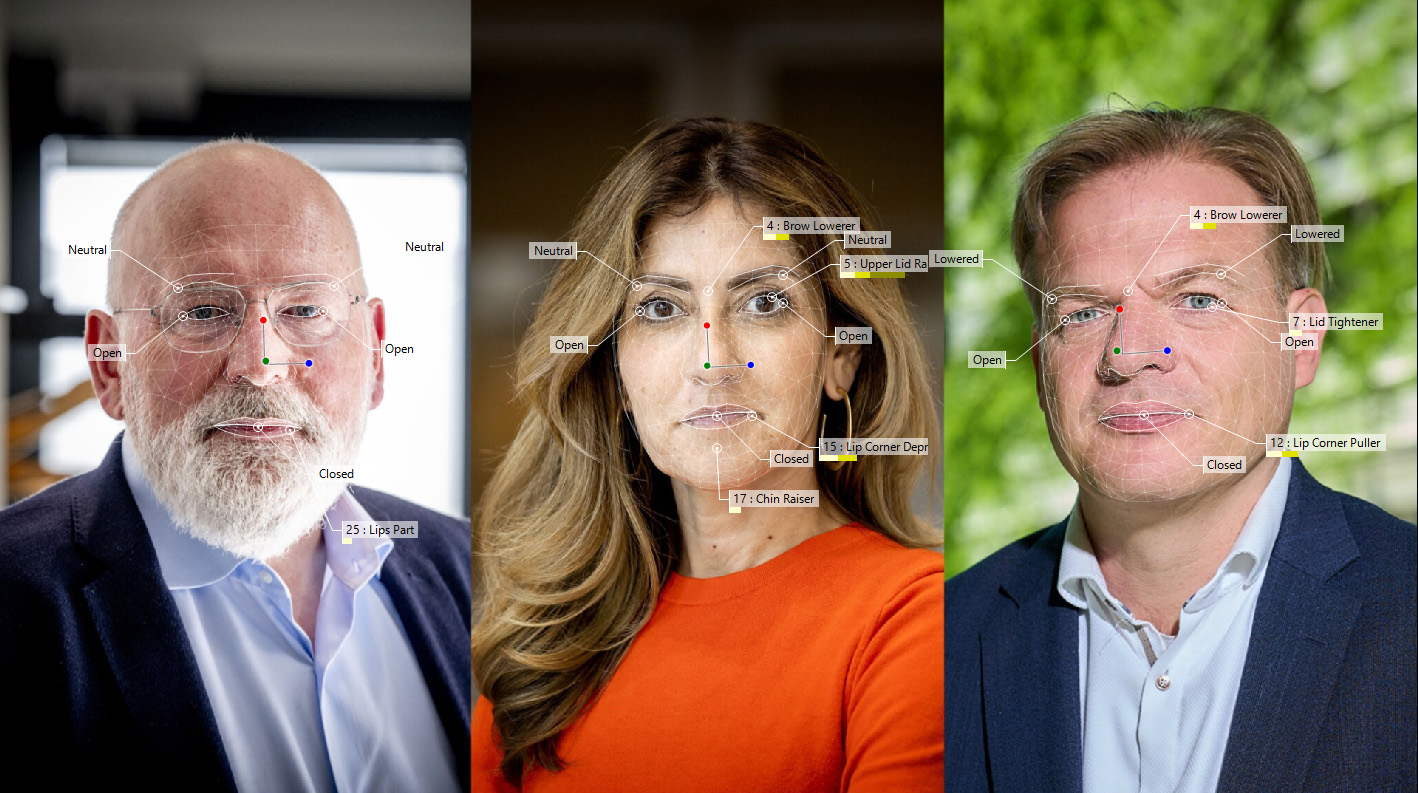

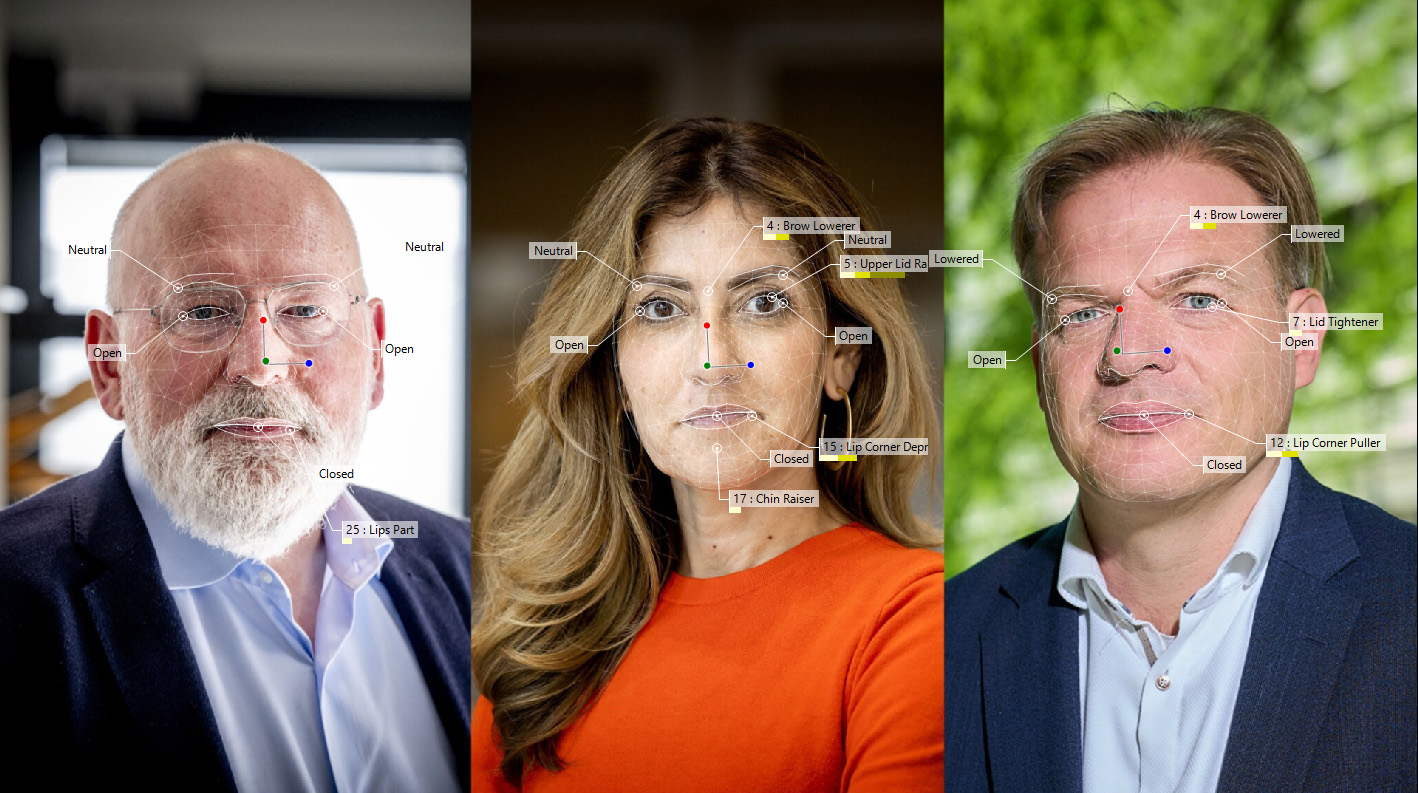

Introducing FaceReader 9.1: Enhanced Facial Analysis for Greater Precision and Functionality

AID2BeWell – Project Results of Social Robot Prototype for Older Users

New website for CAS research project on injury-free exercise

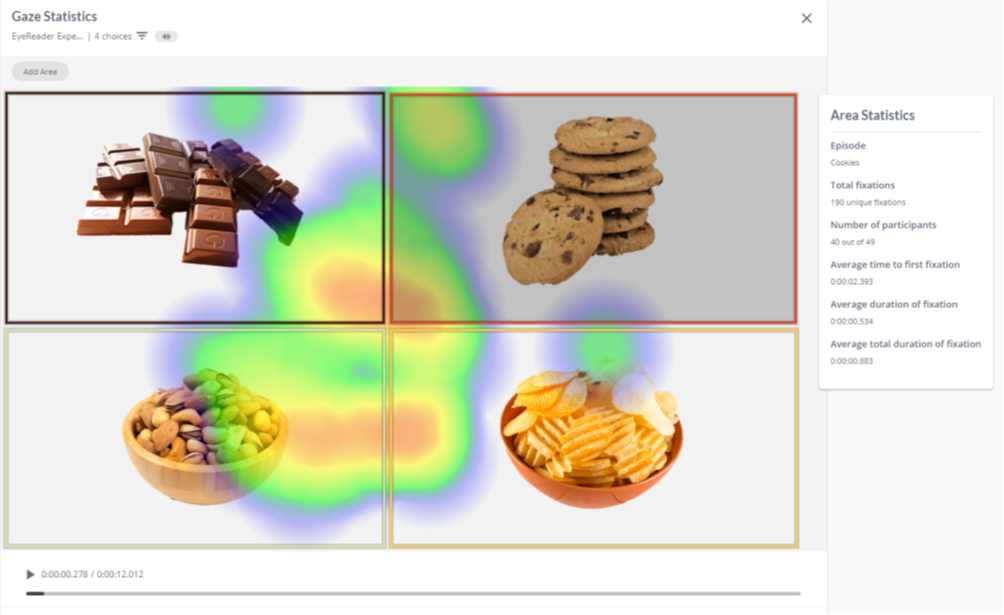

EyeReader – Webcam-based Eye Tracking Technology

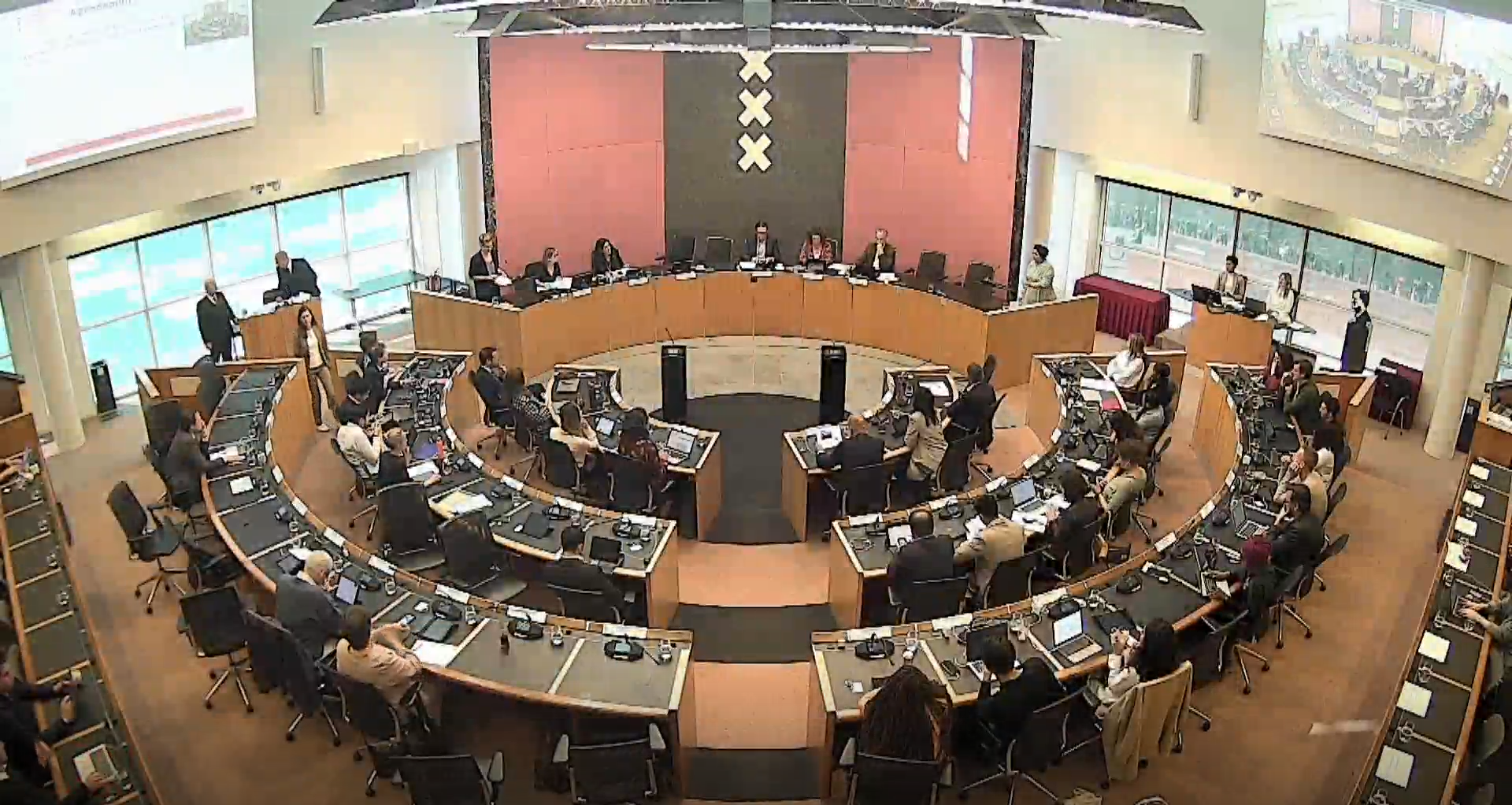

AI Aid for Bite Sized Debates

Student project with Emotion Recognition Software for Online Videoconferencing

FaceReader 9 Release – Improved Analysis Performance and Possibilities

Presenting Posters at Online AI Conferences